The Cookie Pool: Polluting the Surveillance Economy from the Inside

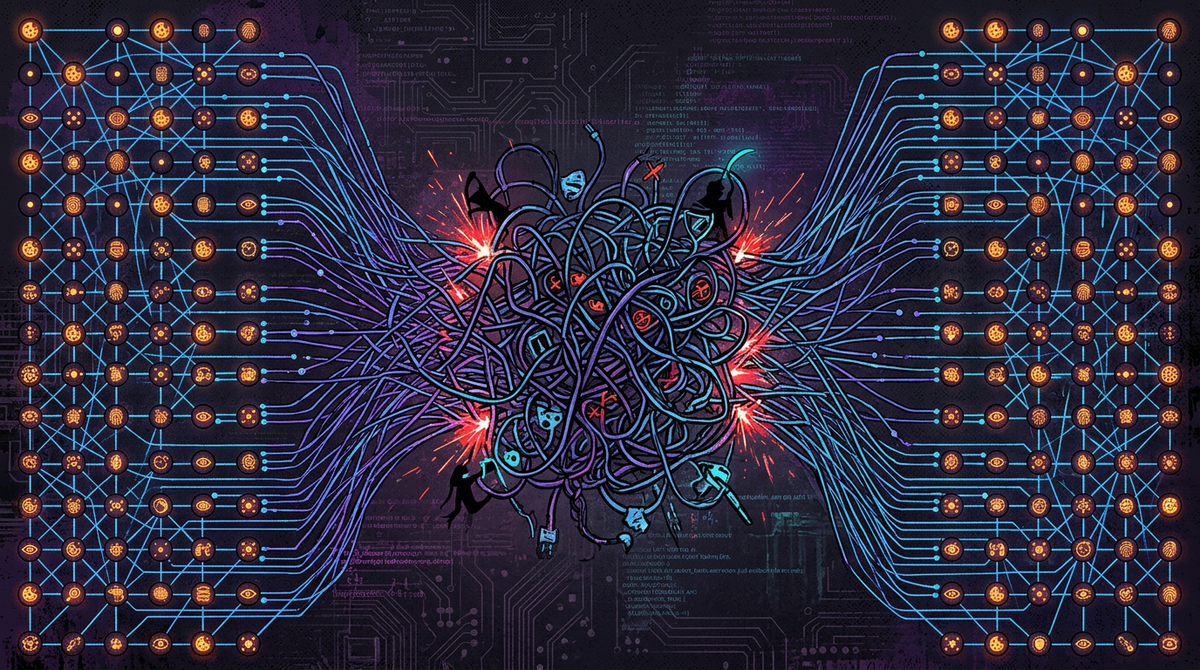

Cookie consent is a farce. Here's a different approach: don't block tracking cookies — pollute them. A communal exchange pool that turns your surveillance profile into a statistical blur of a thousand different people.

Cookie consent is a farce.

GDPR was supposed to give people control over their data. What we got instead was a decade of dark patterns: consent banners engineered to exhaust you into clicking "Accept All." Reject buttons buried three menus deep. "Legitimate interest" — a legal clause so deliberately vague it lets companies track you without consent and call it paperwork. And the crowning insult: sites that remember you said yes for years, but forget you said no the moment you close the tab.

The companies are following the rules. They're just following them in the worst possible faith.

So here's an idea. Instead of trying to opt out, what if we made the data worthless?

The Concept: Cookie Pool

Tracking cookies work because they're personal. A tracker plants an ID on your device, sees you across sites, and builds a profile — your habits, your interests, your vulnerabilities, your price sensitivity. The ID only has value because it consistently refers to you.

What if it didn't?

The Cookie Pool is a voluntary, communal tracking cookie exchange.

Here's how it would work:

- Identify and separate: A browser extension scans your cookies and classifies them. Functional cookies (session tokens, login state, preferences) stay local and private. Tracking and advertising cookies are isolated.

- Contribute to the pool: A random ~10% of your tracking cookies are anonymised, stripped of anything personally functional, and contributed to a shared peer-to-peer pool. Think BitTorrent but for surveillance identifiers.

- Pull from the pool: In exchange, your browser silently pulls a random 10% of tracking cookies from other pool participants and stores them locally.

- Repeat on a rolling basis: The swap runs periodically. Your tracking profile is now a chimera: part you, part a retiree in Düsseldorf, part a student in Lisbon, part whoever else is in the pool.

The trackers still see consistent cookie IDs. The consent banners still fire. Nothing is blocked, nothing is broken. But the profile they're building is meaningless — a statistical blur of hundreds of different people.
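How fast does the blur set in? A back-of-the-envelope sketch, under assumptions that are mine rather than anything measured: each round replaces a uniformly random 10% of your tracking cookies, and the pool is large enough that you never pull your own cookies back. Then the expected share of your original cookies after k rounds is 0.9^k. The function names below are hypothetical.

```python
import math


def own_fraction(rounds: int, swap_fraction: float = 0.10) -> float:
    """Expected share of your original tracking cookies left after `rounds` swaps."""
    return (1.0 - swap_fraction) ** rounds


def rounds_until(target: float, swap_fraction: float = 0.10) -> int:
    """Smallest number of rounds after which the expected share is at or below `target`."""
    return math.ceil(math.log(target) / math.log(1.0 - swap_fraction))


print(rounds_until(0.5))   # 7: under half the jar is still yours
print(rounds_until(0.1))   # 22: under a tenth is still yours
```

This only counts cookies. It says nothing about how much a tracker can still infer from the cookies that remain yours, or from signals other than cookies.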

Why This Is Interesting

Most privacy tools work by subtraction: block the tracker, delete the cookie, refuse consent. These approaches work, but they're adversarial in a way that's easy to detect and route around. A browser with no third-party cookies looks different from everyone else. Fingerprinting fills the gap.

The Cookie Pool works by pollution rather than refusal. The tracking infrastructure keeps running. The data keeps flowing. It just becomes garbage at scale.

This is also collectively effective in a way that individual privacy tools aren't. One person using an ad blocker hurts one person's ad profile. Ten million people in a cookie pool degrade the entire surveillance graph. The value of tracking data is in its accuracy; corrupt that at scale and the economics stop working.

Technical Shape of the Thing

This is a rough sketch, not a spec. But the components are all buildable:

- Browser extension: handles local cookie classification and pool contribution/retrieval. The hardest part is the classification heuristic: distinguishing `_ga` (tracking) from `session_id` (functional). Doable with a combination of known-tracker lists (e.g. EasyPrivacy) and heuristics.

- P2P pool: no central server needed, no single point of failure or legal liability. WebRTC data channels or a DHT (distributed hash table) could handle the exchange, though WebRTC peers still need some signalling step to find each other. The pool doesn't need to store cookies, just relay them.

- Anonymisation layer — before contribution, strip any cookie value that contains identifiable structure (email hashes, user IDs). Contribute only the opaque tracking tokens.

- Rotation schedule — swap a small percentage frequently rather than a large batch infrequently. Gradual corruption looks more like normal browsing variance than an obvious attack.
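The classification and anonymisation components could start out as simple as the sketch below. It's Python rather than extension code, and every list and pattern in it is an illustrative assumption: the tiny hard-coded sets stand in for a real known-tracker list such as EasyPrivacy, `tracker.example` is a made-up domain, and the regexes are a first guess at "identifiable structure", not a vetted filter. When in doubt it treats a cookie as functional, since leaking a session token is far worse than failing to share a tracker ID.

```python
import re

# Illustrative stand-ins for a real known-tracker list.
TRACKER_DOMAINS = {"doubleclick.net", "tracker.example"}
TRACKER_NAMES = {"_ga", "_gid", "_fbp"}
# Name fragments that suggest a cookie the site needs in order to work.
FUNCTIONAL_HINTS = ("session", "csrf", "auth", "login", "pref")

# Patterns for values that look personally identifying (assumed heuristics).
EMAIL_RE = re.compile(r"[^@\s]+(@|%40)[^@\s]+\.[a-z]{2,}", re.IGNORECASE)
HEX_DIGEST_RE = re.compile(r"^(?:[0-9a-f]{32}|[0-9a-f]{40}|[0-9a-f]{64})$", re.IGNORECASE)
USER_ID_RE = re.compile(r"(?:^|[&;|])(?:uid|user_?id|email)=", re.IGNORECASE)


def classify(domain: str, name: str) -> str:
    """Return "tracking" or "functional"; unknown cookies default to functional."""
    lowered = name.lower()
    if any(hint in lowered for hint in FUNCTIONAL_HINTS):
        return "functional"
    if lowered in TRACKER_NAMES:
        return "tracking"
    host = domain.lstrip(".").lower()
    if any(host == d or host.endswith("." + d) for d in TRACKER_DOMAINS):
        return "tracking"
    return "functional"


def safe_to_contribute(domain: str, name: str, value: str) -> bool:
    """True only for tracking cookies whose value shows no identifiable structure."""
    if classify(domain, name) != "tracking":
        return False
    looks_personal = (
        EMAIL_RE.search(value)
        or HEX_DIGEST_RE.match(value)      # MD5/SHA-1/SHA-256 sized hex, e.g. an email hash
        or USER_ID_RE.search(value)
    )
    return not looks_personal
```

For example, `classify("example.com", "_ga")` returns `"tracking"` and `classify("example.com", "session_id")` returns `"functional"`. The hash-length check will also reject some genuinely opaque tokens, which seems like the right direction to err in.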

The Questions Worth Asking

Is this legal? Genuinely unclear, and that's interesting. You're not accessing anyone else's accounts. You're not breaking anything. You're sharing strings of text that were placed on your device by third parties who didn't ask your permission. The legal novelty here is part of the point.

Does it actually work at scale? It's a numbers game. A pool of 1,000 users probably doesn't do much. A pool of 1 million users makes tracking unreliable. Ten million makes it useless. The network effect is the whole thing.

Can trackers detect and filter for it? Probably, eventually. But that's a cat-and-mouse game that costs them money and engineering time. That's a fine outcome.

Why Bother

Because the current situation is one where billions of people nominally have rights that the industry has spent a decade engineering around. GDPR without enforcement is theatre. Consent without real choice isn't consent.

I'm not naive enough to think a browser extension fixes surveillance capitalism. But I do think tools that make tracking data expensive to maintain and unreliable to use are worth building. Not because they'll win. Because they'll make the economics hurt a little.

That's enough.

This is a concept sketch, not a finished proposal. If you're a privacy researcher, a browser extension developer, or someone who thinks this is interesting and stupid in equal measure — [get in touch].